Gartner Symposium is the high-level CIO conference to attend. At this venue, Gartner unveils new thinking, including new predictions, surveys, and other insights into how people and technology will solve the problems of the future.

Highlights

Introduction to the algorithmic economy

The kickoff keynote by Peter Sondergaard, the SVP leading Gartner’s Research business, began by imploring businesses to invest in and create algorithms as the key to unlocking insight into the vast amounts of data collected and generated. Peter went on to say that algorithms describe the way the world works; in software, they are key to allowing systems to exchange data with one another (otherwise known as M2M). These algorithms will be used by agents such as Google Now, Siri (Apple), Cortana (Microsoft), and Alexa (Amazon). These are highly advanced, algorithmically driven agents which use vast amounts of both public and private data to provide an interactive and intuitive interface, allowing them to predict what a user would want. These types of agent systems will define the post-app world. Peter went on to describe how these opportunities are the future of computing, and represent a $1 trillion business opportunity.

The Robots Are Here

Darryl Plummer presented the trends for 2016 and beyond. They included the movement towards automated agents or robots. This includes what Gartner calls the digital mesh (devices, ambient experiences, and 3D printing) along with the application of smart machines (information, machine learning, and autonomous agents and things). These major changes will underpin the new reality IT must exist within. The cultural issue I’ve seen playing out in the media is the relationship between people and machines. Right now this relationship is cooperative; the future holds codependency and ultimately competition, says Darryl.

The prediction is that by 2018, 20% of all business content will be authored by machines. These machines will be fed by the IoT ecosystem of sensors, transport, and analytics. Gartner predicts that 1 million IoT devices will be purchased every hour in 2021. These not only create tremendous opportunity, but also incredible amounts of risk, both in terms of personal information and security. Darryl went on to make several daunting predictions around the risk and security issues that will arise from this digitization.

The final and most interesting trend, which came up several times throughout Symposium, is the use of smart agents, as I mentioned above. Gartner predicts that by 2020 smart agents will facilitate 40% of mobile interactions, which will mark the start of the post-app era.

2016 CIO Agenda and Survey

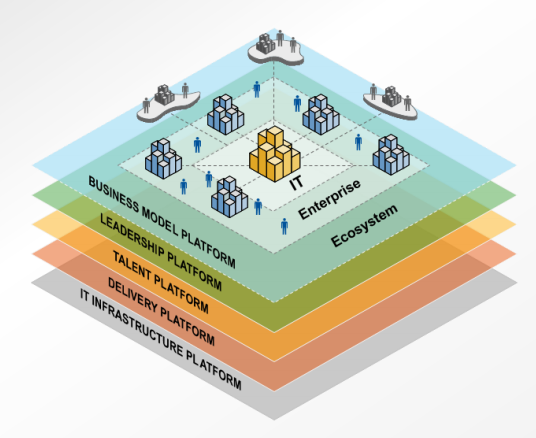

Dave Aron spoke about the freshly published 2016 CIO agenda and CIO survey. There was quite a bit of evolution here, Gartner introduced the digital platform:

The CIO survey included 2,944 CIOs managing $250B in IT spend. Revenue is clearly already shifting towards digital, with CIOs estimating that 16% of revenues are digital today. This is just the beginning of the journey; in 5 years the estimate is that 37% of revenues will be digital. These digitalization changes are made to build efficiencies in operations, along with direct revenue generation.

The platform Dave laid out included aspects of bimodal delivery (on the bottom of the diagram above). The talent platform was one of the largest struggles CIOs mentioned in the survey, with skills shortages as the critical gap. With fewer graduates in Computer Science over the last decade, this shortage is likely to continue and grow.

The leadership platform also included insights into the chief digital officer. Dave touched on the fact that CDOs are being put into place, but at a slower rate than Gartner had predicted. Based on the survey data, this means that CIOs are taking on a lot of digital transformation responsibilities.

Private Cloud

I attended a session led by Donna Scott focused on private cloud. Based on Donna’s polling at Gartner's infrastructure and operations management event in June of this year, 40% of respondents said they would put 80% of workloads in private cloud and 20% in public cloud. The main reasons were agility, speed, compliance, security, application performance, protecting IP, reducing costs, and dealing with politics. She then highlighted common use cases for hybrid cloud, or the use of public and private clouds at the same time. These can be split into simpler use cases, such as running development in public cloud while production is in private cloud, and advanced use cases, like active/active application resiliency.

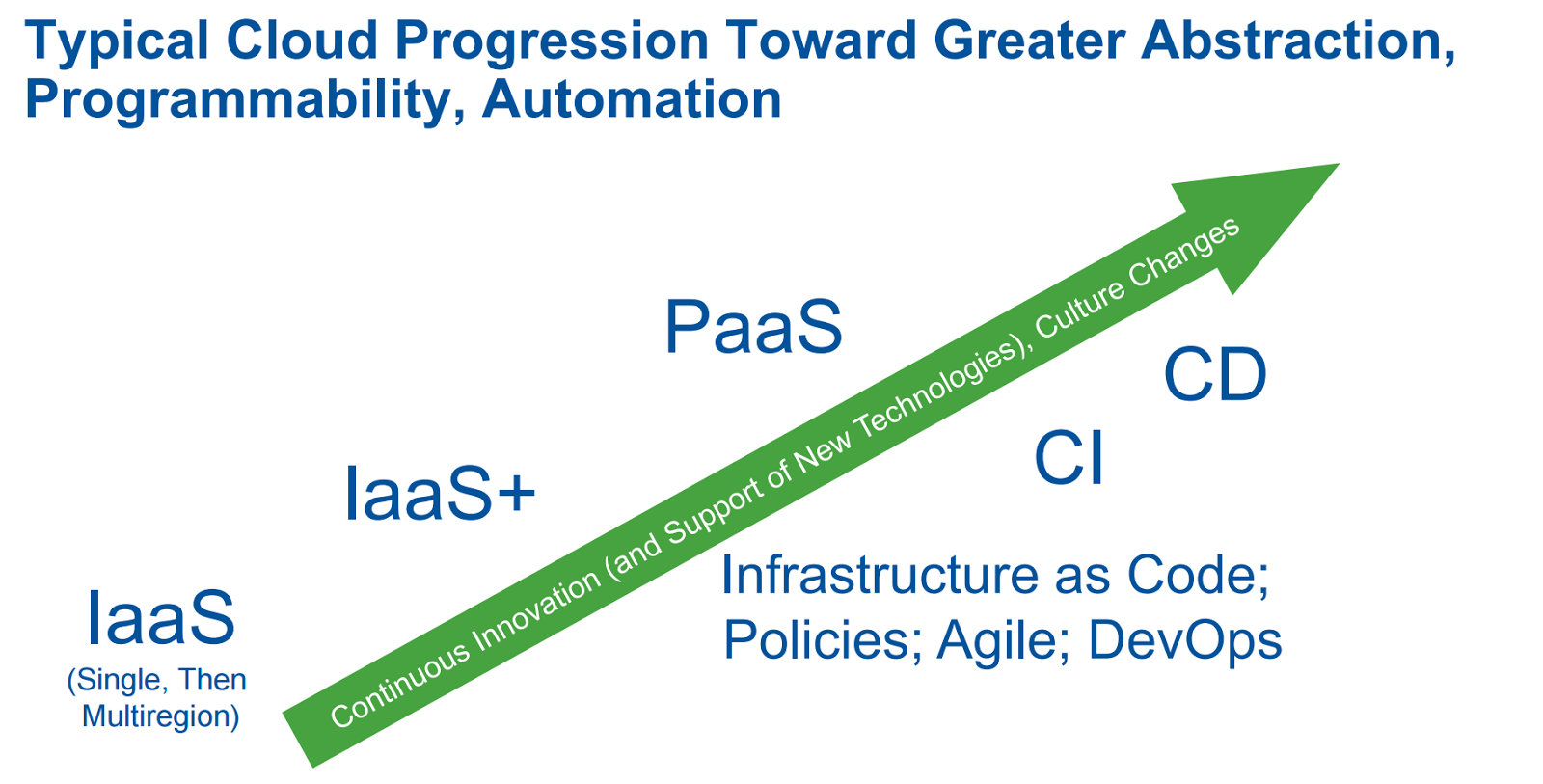

Most successful private cloud implementations are done by type A companies who embrace hyperscale and web scale computing. Donna highlighted examples from companies such as Paypal, DirecTV, Chevron, McKesson, and Cox Automotive. The progression most companies make in cloud is highly tied to application maturity:

Jeffrey Immelt — GE CEO

The interview of GE CEO Jeffrey Immelt was quite interesting. GE has over 15,000 software engineers, and it is changing its business dramatically to build a platform for the industrial internet. Immelt believes that if GE can build the platform to use this data and the analytics to create greater efficiencies around industry (such as transportation, where GE is one of the largest manufacturers of jet engines and rail locomotives), it can create a digital differentiator within its products and allow other manufacturers to use its platform. He also outlined how the analytics will be used to create a “digital twin” for the physical assets within the analytics layer, including all relevant data and metrics for each instance of machinery. One great quote resonated with me after seeing all of the M&A within the IT Operations space: “In 20 years of M&A, more value was destroyed than created by software acquisitions.”

I also tried out the new Microsoft HoloLens, which was pretty nifty. We look forward to supporting that technology within AppDynamics as it matures and is brought to the enterprise market, which is where Microsoft has been focusing.

Symposium is a great high-level conference. Having spoken here twice but never been able to attend the sessions, I’ve gained a new appreciation for this event, both in terms of breadth and quality. I still believe that it’s often too broad for many vendors and attendees, but it’s a wonderful overview of what’s happening in our ever-growing world of IT complexity and nuance.
